Overview

Architecting a zero-friction payroll engine from paper to payload.

A highly relational, custom CMS built for Tauri Builders Inc., managing 500+ workers across three provinces. The platform uses AI to digitize unstructured paper timesheets, turning a fragile, error-prone biweekly payroll cycle into a reliable, verifiable workflow.

- Role: Software Architect & UI/UX

- Timeline: 2024

- Employees: 500+

- Provinces: 3

- Timesheets per cycle: ~100

- AI model: Claude

Before vs After

Before

- ~100 paper timesheets transcribed manually every cycle

- Staff relied on memory for worker hourly rates

- Overtime and billing rules tracked in disconnected contracts

- High risk of human error causing delayed or inaccurate pay

After — Shipped

- Timesheets are uploaded and processed by AI in minutes

- Rates, overtime limits, and billing splits resolve instantly

- A digital twin interface lets staff verify OCR output before commit

- Automated, on-demand reporting and PDF invoicing

Platform

Eight screens. One unified system.

This is a comprehensive operational ERP, not just a timesheet app. Every screen is purpose-built to securely query and mutate one central, highly relational data model.

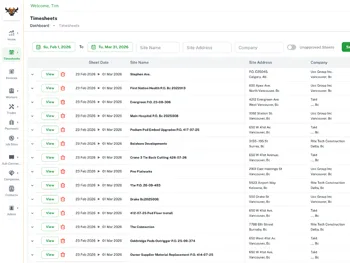

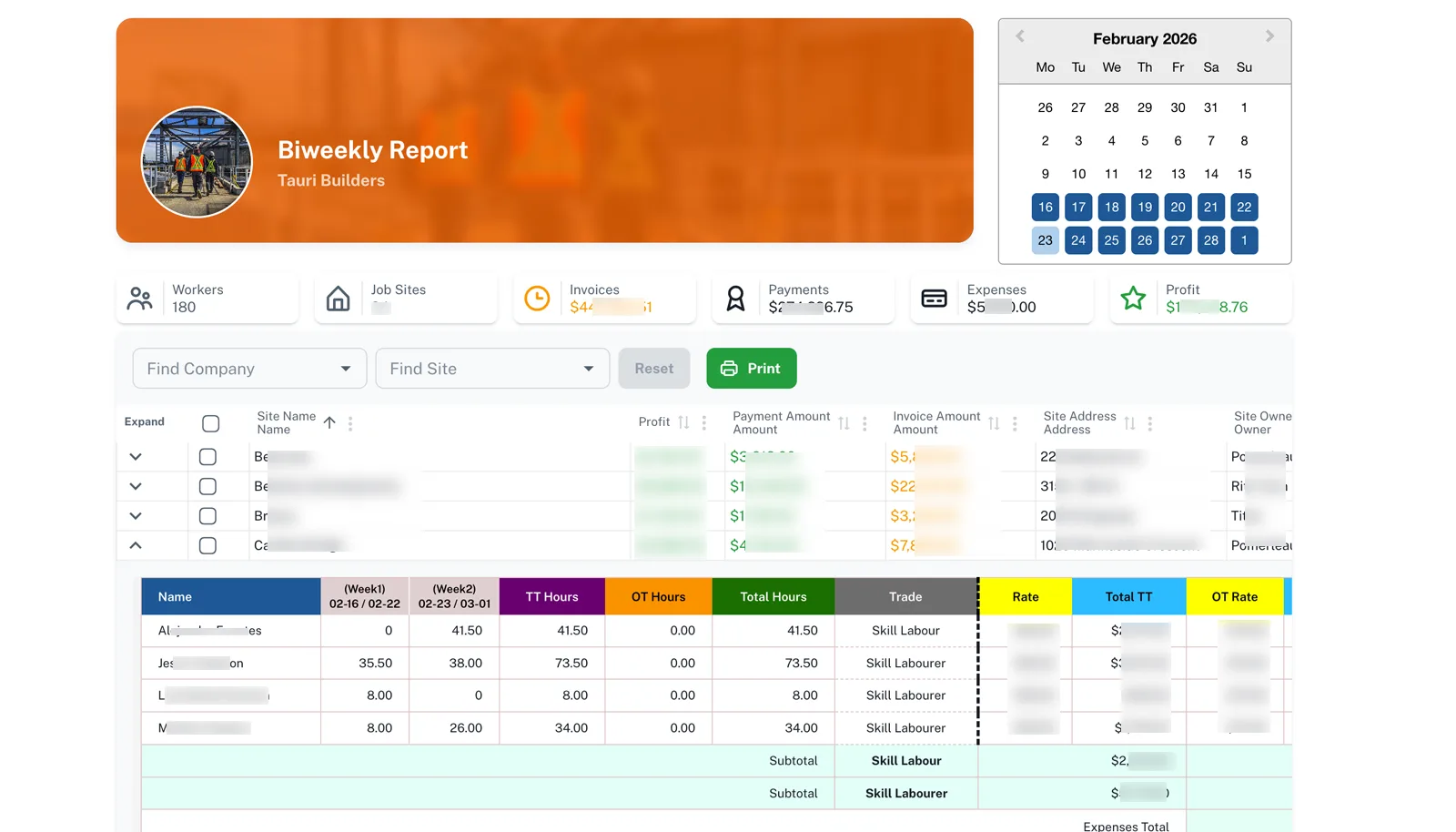

- Dashboard

- Billing Calendar

- Timesheet List

- Digital Twin

- Worker Reports

- Billing Reports

- PDF Invoice

- Records / Admin

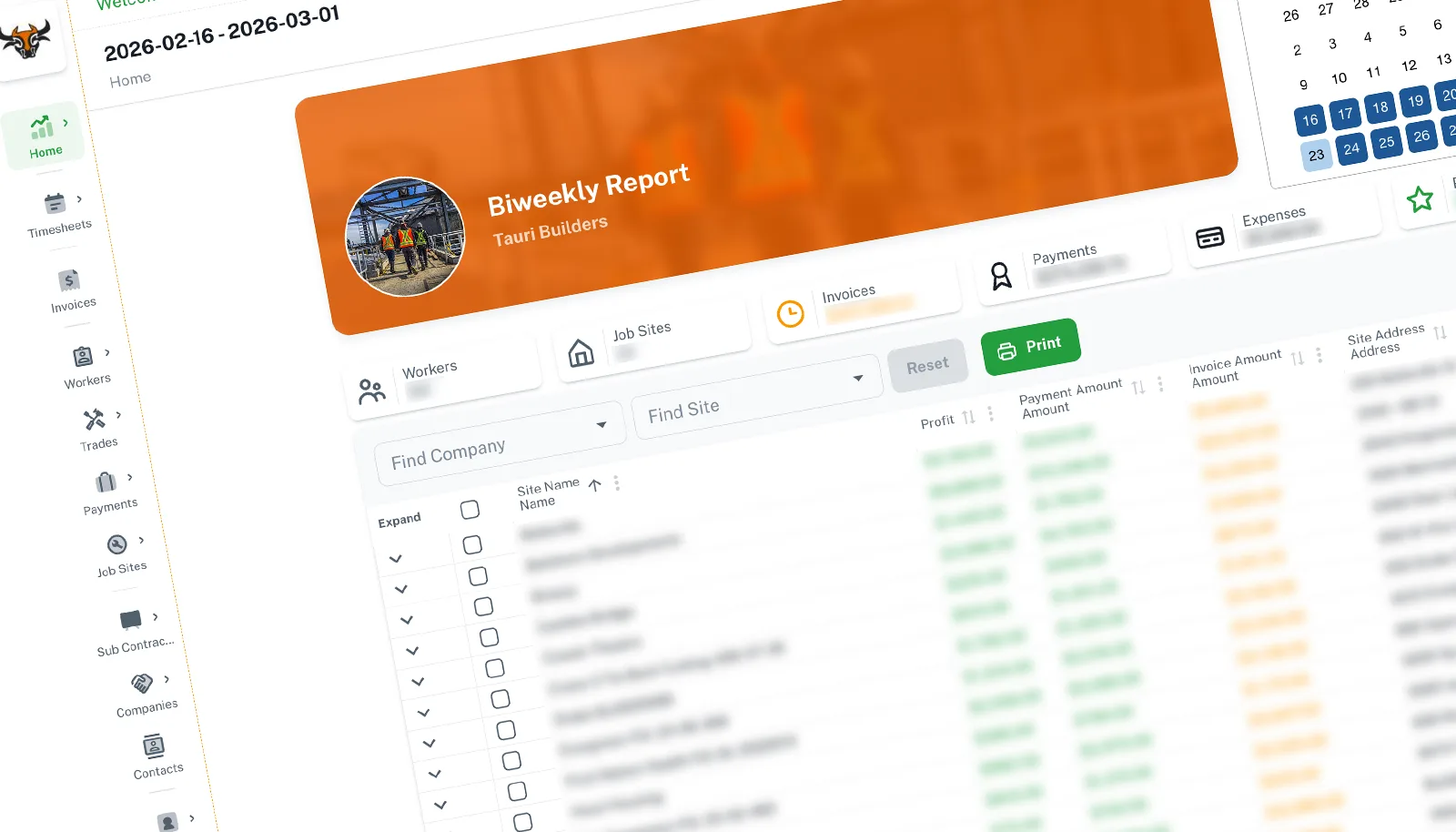

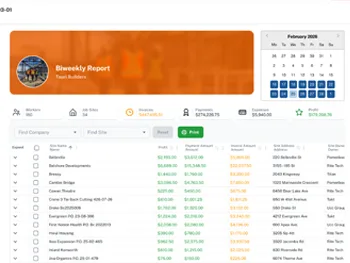

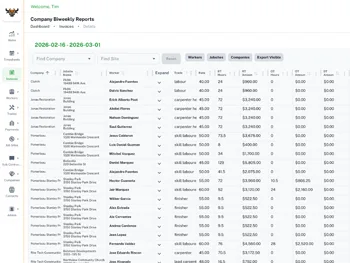

Dashboard

The payroll command centre

The dashboard gives instant visibility into active payroll cycles. Management can monitor active jobsites, worker counts, gross revenue, and projected profit margins in real time. A data grid below lets staff filter and select jobsites, then generate batched jobsite PDF invoices with a single click.

Real-time cycle overview with actionable data grids

Before this, assessing the health of a payroll cycle meant manually aggregating spreadsheets. This view gives everyone the same operational picture, drawn from live, verified database state.
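The dashboard's per-jobsite figures are, at heart, a roll-up of committed entries. A minimal sketch of that aggregation follows, assuming each committed entry already carries its resolved wage and billing rates. The `CommittedEntry` shape and the `summarizeCycle` name are hypothetical, and margin is taken here as (revenue - wage cost) / revenue.

```typescript
// Hypothetical shape of one committed, verified time entry with rates already resolved.
interface CommittedEntry {
  jobsiteId: string;
  workerId: string;
  hours: number;       // billable hours for this entry
  wageRate: number;    // $/hour paid to the worker
  billingRate: number; // $/hour billed to the client
}

interface JobsiteSummary {
  jobsiteId: string;
  workerCount: number;
  revenue: number;
  wageCost: number;
  margin: number; // (revenue - wageCost) / revenue; 0 when there is no revenue
}

// Roll committed entries up into one dashboard row per jobsite.
function summarizeCycle(entries: CommittedEntry[]): JobsiteSummary[] {
  const bySite = new Map<string, { workers: Set<string>; revenue: number; wageCost: number }>();
  for (const e of entries) {
    let acc = bySite.get(e.jobsiteId);
    if (!acc) {
      acc = { workers: new Set<string>(), revenue: 0, wageCost: 0 };
      bySite.set(e.jobsiteId, acc);
    }
    acc.workers.add(e.workerId); // count distinct workers per site
    acc.revenue += e.hours * e.billingRate;
    acc.wageCost += e.hours * e.wageRate;
  }
  return Array.from(bySite, ([jobsiteId, acc]) => ({
    jobsiteId,
    workerCount: acc.workers.size,
    revenue: acc.revenue,
    wageCost: acc.wageCost,
    margin: acc.revenue === 0 ? 0 : (acc.revenue - acc.wageCost) / acc.revenue,
  }));
}
```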

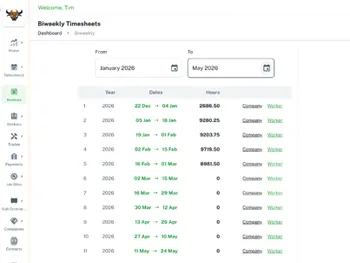

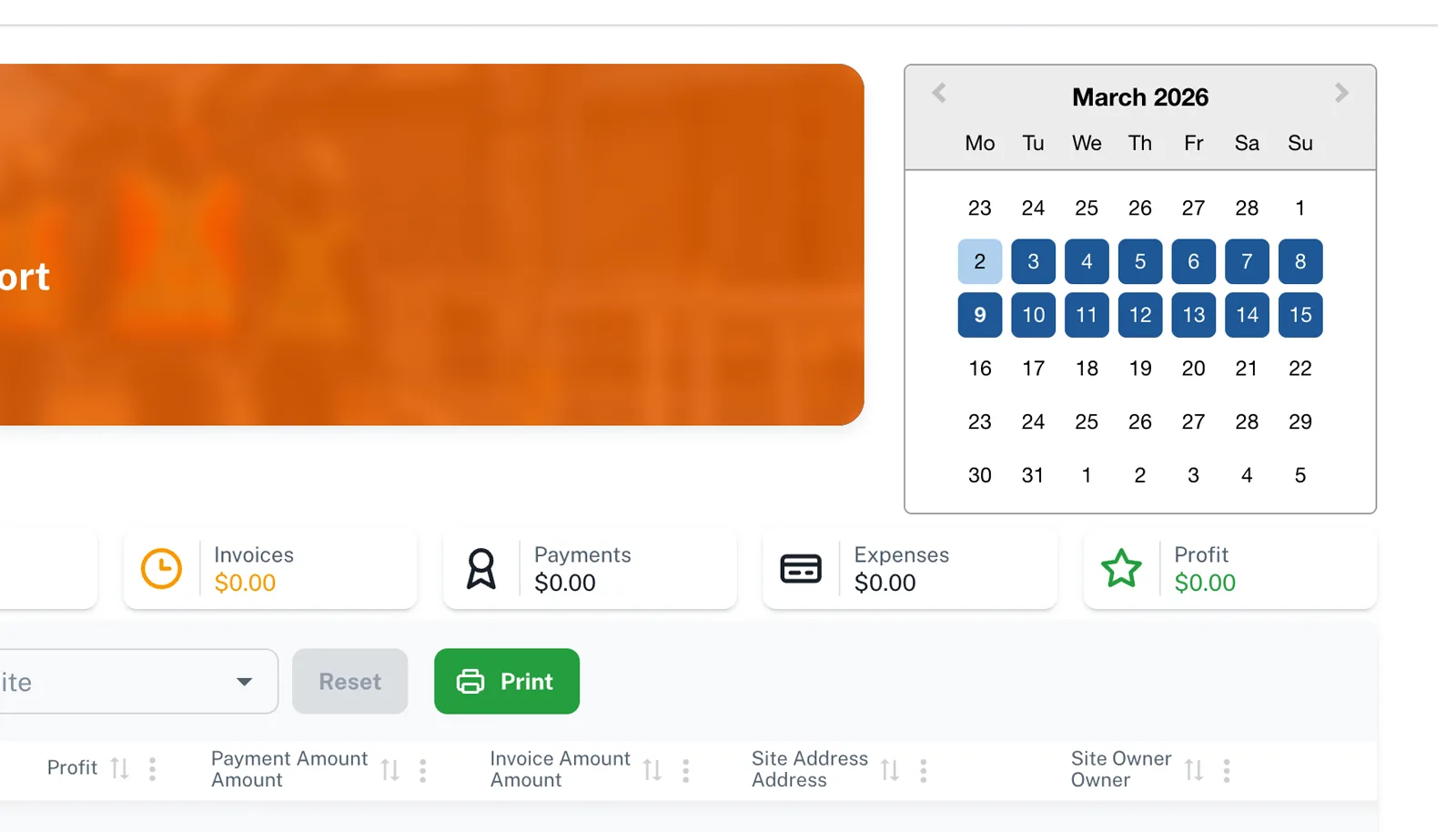

Billing Calendar

Context-aware navigation

A custom date picker synced directly to Tauri's biweekly billing logic. Clicking any date selects the 14-day billing window that contains it, so every downstream query fetches the right data without manual date math.

Billing cycle calendar — hardcoded to the business rhythm

This completely eliminated off-by-one date entry errors, saving staff the mental overhead of constantly referencing physical calendars.
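Snapping a clicked date to its 14-day window reduces to modular arithmetic on the number of days since a known cycle start. The sketch below assumes a hypothetical anchor of Monday, 2024-01-01 (the real anchor date is not stated here) and works in whole UTC days so daylight-saving shifts cannot move a boundary. The function and constant names are illustrative.

```typescript
const MS_PER_DAY = 24 * 60 * 60 * 1000;
const CYCLE_DAYS = 14;

// Hypothetical anchor: the first day of one known billing cycle (assumption, not from the source).
const CYCLE_ANCHOR_UTC = Date.UTC(2024, 0, 1); // 2024-01-01, a Monday

interface BillingWindow {
  start: string; // inclusive, YYYY-MM-DD
  end: string;   // inclusive, YYYY-MM-DD (start + 13 days)
}

function toIsoDate(ms: number): string {
  return new Date(ms).toISOString().slice(0, 10);
}

// Map a calendar date (month is 1-12) to the 14-day billing window containing it.
function billingWindowFor(year: number, month: number, day: number): BillingWindow {
  const clicked = Date.UTC(year, month - 1, day);
  const daysFromAnchor = Math.round((clicked - CYCLE_ANCHOR_UTC) / MS_PER_DAY);
  // Floor-modulo so dates before the anchor also land in the correct window.
  const offset = ((daysFromAnchor % CYCLE_DAYS) + CYCLE_DAYS) % CYCLE_DAYS;
  const startMs = clicked - offset * MS_PER_DAY;
  return {
    start: toIsoDate(startMs),
    end: toIsoDate(startMs + (CYCLE_DAYS - 1) * MS_PER_DAY),
  };
}
```

Under that anchor, `billingWindowFor(2024, 1, 20)` returns `{ start: "2024-01-15", end: "2024-01-28" }`, so every query downstream receives the same two boundary dates.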

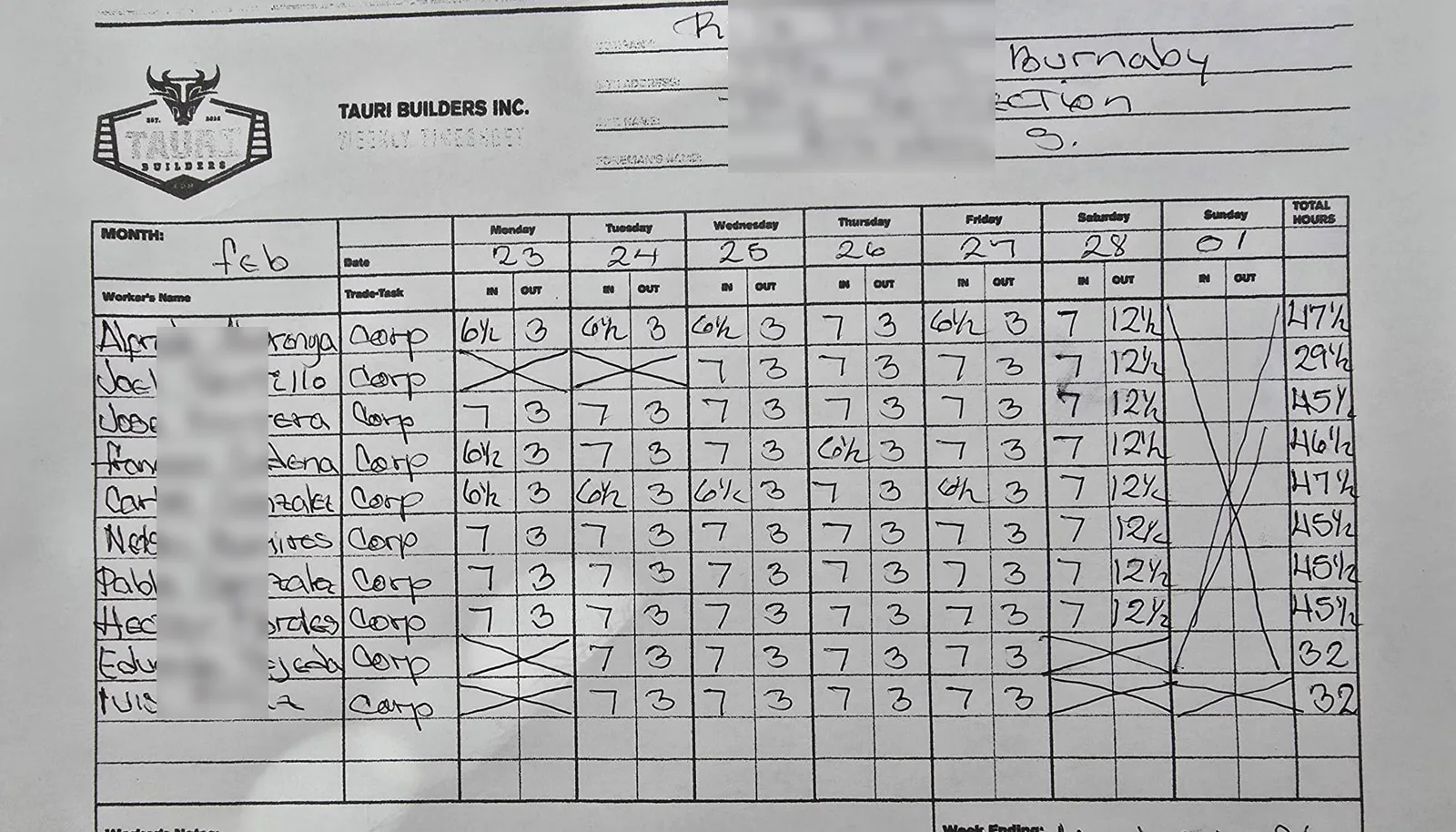

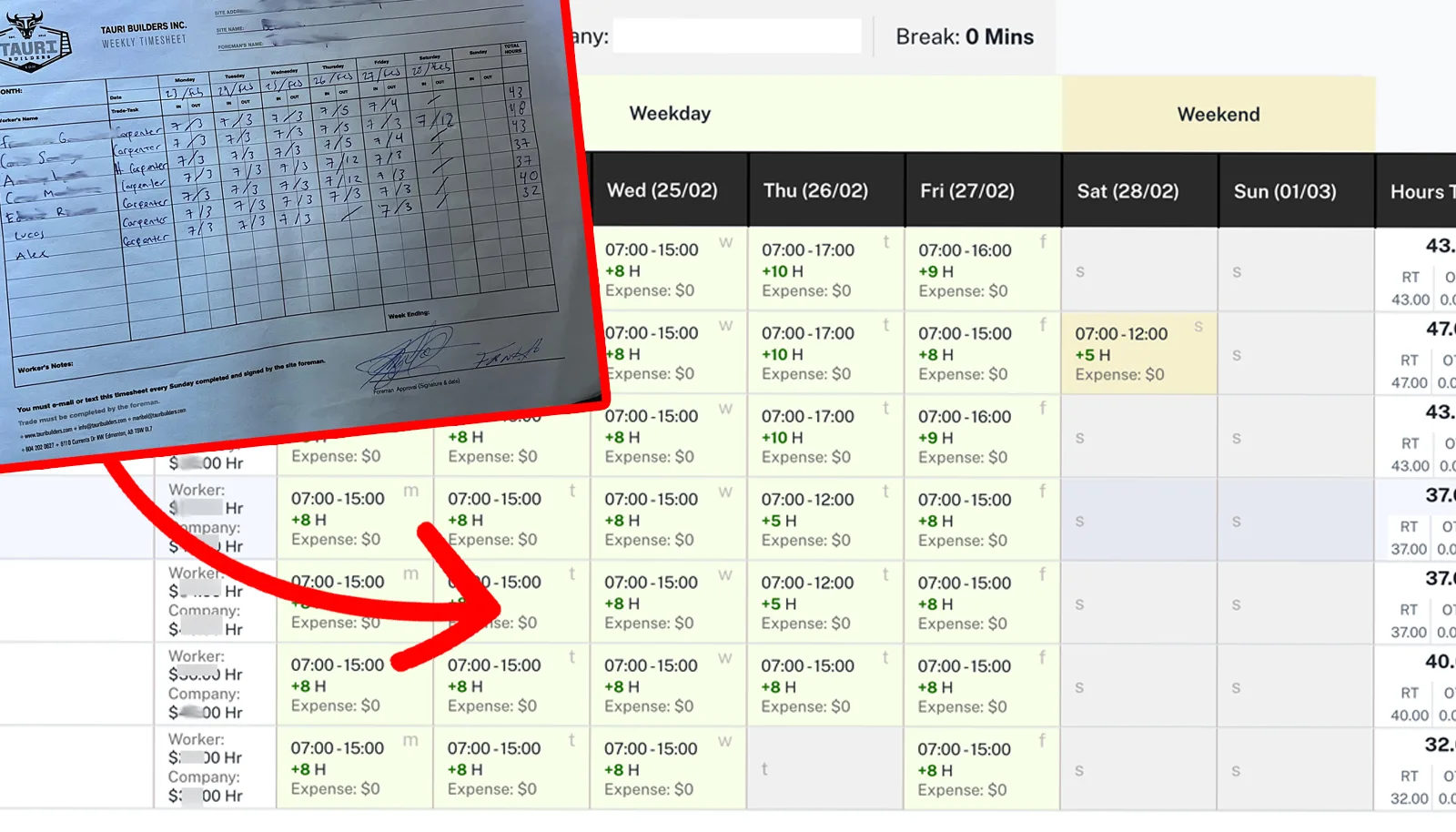

The Problem

Every two weeks, the same operational bottleneck hit. Around a hundred handwritten timesheets poured in from foremen. Admin staff had to transcribe them into Excel, recall base rates from memory, apply localized overtime rules (which varied by jobsite owner), and calculate precise payroll figures under strict deadlines.

The process was inherently fragile. A misread rate or a forgotten overtime clause directly affected workers' livelihoods or damaged client trust through overbilling. To complicate matters, worker wages and jobsite billing rates are distinct figures. Managing this dual-ledger logic by hand was unsustainable for a growing business.

The chaotic input: highly variable handwritten timesheets

Processing this raw unstructured data was the primary workflow bottleneck. The architectural challenge was building a reliable bridge from sloppy ink to structured JSON.

"We were scrambling every two weeks. There was no room to get it wrong — these are people's paycheques — but the process made it very easy to get it wrong."

Insight

The core issue wasn't the spreadsheet software itself; it was the lack of relational data architecture. The staff were functioning as human database routers.

Architectural Realization

Every parameter required to run payroll (wages, roles, site locations, overtime thresholds) already existed as a business rule. By encoding those rules as relations in a PostgreSQL database, we could move the cognitive load from staff to the system. Staff would only need to verify hours, not calculate business logic.

Assumed

Better spreadsheet templates or macros would fix the errors.

Reality

Spreadsheets were just symptoms of a siloed data model. Business logic had to be encoded in a unified backend.

Hypothesis

Automate the relational joins. If the system knows who is where, it knows what they earn and what to bill.

Solution

A secure, full-stack CMS engineered to consume unstructured paper inputs via AI, map them to a strict relational database, and export verifiable, split-ledger financials.

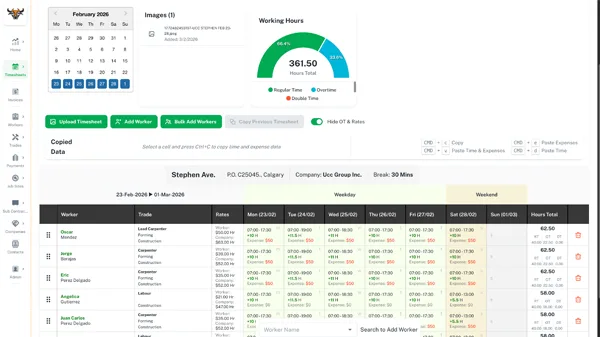

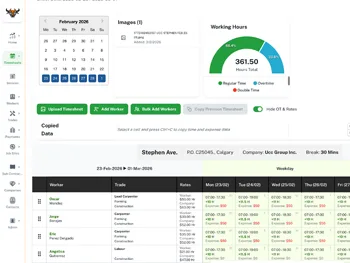

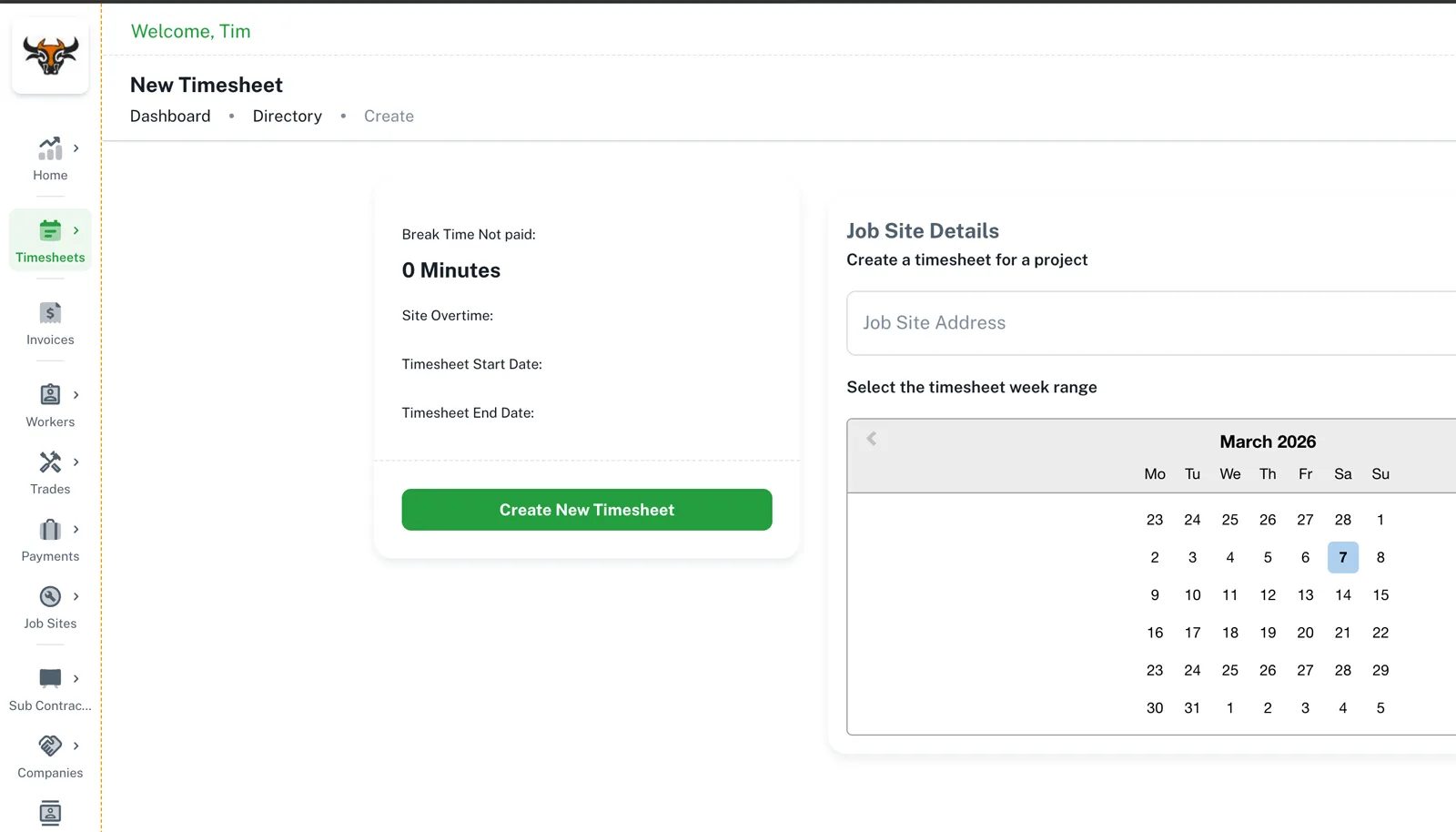

Timesheet Processing

End to end

From paper to verified payload

Upload → AI Extraction → Human Verification → Commit

The system orchestrates Claude OCR asynchronously. Once extraction completes, the UI hydrates a validation grid where staff can audit the data before executing the final database transaction.
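One way to keep the asynchronous Upload → AI Extraction → Human Verification → Commit flow honest is to model it as an explicit state machine, so a sheet can never reach the database without passing review. The status names and the retry-after-failure transition below are assumptions for illustration, not the platform's actual states.

```typescript
// Hypothetical statuses for an uploaded timesheet.
type TimesheetStatus = "uploaded" | "extracting" | "needs_review" | "committed" | "failed";

// Allowed transitions; commit is only reachable from human review.
const TRANSITIONS: Record<TimesheetStatus, TimesheetStatus[]> = {
  uploaded: ["extracting"],
  extracting: ["needs_review", "failed"],
  needs_review: ["committed"],
  committed: [],          // terminal state
  failed: ["extracting"], // assumption: a failed extraction may be retried
};

function advance(current: TimesheetStatus, next: TimesheetStatus): TimesheetStatus {
  if (!TRANSITIONS[current].includes(next)) {
    throw new Error(`Illegal transition: ${current} -> ${next}`);
  }
  return next;
}
```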

Context Initialization via Jobsite Select

Uploading a document and assigning a jobsite acts as the root node. This single action triggers the backend to resolve the entire rate context — fetching active workers, site-specific overtime thresholds, and corresponding billing data.

The only required manual classification

Shifting the cognitive load onto the system schema prevents users from making downstream calculation errors.
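Resolving the rate context from a single jobsite selection amounts to a handful of keyed lookups. In the sketch below, plain arrays stand in for the PostgreSQL tables, and every type and field name (`Assignment`, `OvertimePolicy`, `billingByRole`, and so on) is hypothetical.

```typescript
// Hypothetical in-memory stand-ins for the database tables.
interface WorkerRecord { id: string; name: string; active: boolean }
interface Assignment { workerId: string; jobsiteId: string }
interface OvertimePolicy { jobsiteId: string; dailyThreshold: number; weeklyThreshold: number; multiplier: number }
interface BillingRate { jobsiteId: string; roleId: string; ratePerHour: number }

interface Db {
  workers: WorkerRecord[];
  assignments: Assignment[];
  overtimePolicies: OvertimePolicy[];
  billingRates: BillingRate[];
}

interface JobsiteContext {
  workers: WorkerRecord[];
  overtime: OvertimePolicy;
  billingByRole: Map<string, number>;
}

// One selection (the jobsite) resolves everything the downstream calculations need.
function resolveJobsiteContext(db: Db, jobsiteId: string): JobsiteContext {
  const workerIds = new Set(
    db.assignments.filter((a) => a.jobsiteId === jobsiteId).map((a) => a.workerId),
  );
  const overtime = db.overtimePolicies.find((p) => p.jobsiteId === jobsiteId);
  if (!overtime) throw new Error(`No overtime policy configured for jobsite ${jobsiteId}`);
  return {
    workers: db.workers.filter((w) => w.active && workerIds.has(w.id)),
    overtime,
    billingByRole: new Map(
      db.billingRates
        .filter((r) => r.jobsiteId === jobsiteId)
        .map((r): [string, number] => [r.roleId, r.ratePerHour]),
    ),
  };
}
```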

Asynchronous AI Parsing

Claude analyzes the image geometry and handwriting, returning structured JSON. A fuzzy-matching algorithm resolves handwritten names against the PostgreSQL worker records. Extracted time strings are converted to computable decimals, and the UI table populates instantly.
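The name matching is described elsewhere as fuzzy-logic SQL; the sketch below swaps in an application-side equivalent so it runs standalone: Levenshtein edit distance, normalized by name length, accepting the closest worker only within a tolerance. The 0.3 threshold and the `matchWorker` name are assumptions. (Inside PostgreSQL, the `fuzzystrmatch` extension provides `levenshtein()` and `pg_trgm` provides trigram `similarity()`.)

```typescript
interface WorkerRecord { id: string; name: string }

// Classic dynamic-programming edit distance, keeping a single row of the table.
function levenshtein(a: string, b: string): number {
  const row = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    let diag = row[0]; // previous row's value at column j - 1
    row[0] = i;
    for (let j = 1; j <= b.length; j++) {
      const above = row[j]; // previous row's value at column j
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      row[j] = Math.min(above + 1, row[j - 1] + 1, diag + cost);
      diag = above;
    }
  }
  return row[b.length];
}

// Lowercase, turn periods/commas into spaces, collapse whitespace.
function normalize(name: string): string {
  return name.toLowerCase().replace(/[.,]/g, " ").replace(/\s+/g, " ").trim();
}

// Return the closest worker, or null if even the best match is too far off.
function matchWorker(handwritten: string, workers: WorkerRecord[], maxRatio = 0.3): WorkerRecord | null {
  const target = normalize(handwritten);
  let best: WorkerRecord | null = null;
  let bestScore = Infinity;
  for (const w of workers) {
    const candidate = normalize(w.name);
    const score = levenshtein(target, candidate) / Math.max(target.length, candidate.length, 1);
    if (score < bestScore) {
      bestScore = score;
      best = w;
    }
  }
  return bestScore <= maxRatio ? best : null;
}
```

Returning `null` rather than a forced guess leaves an unmatched name for staff to resolve during verification.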

Data normalization output

```json
{
  "workers": [
    {
      "name": "John Smith",
      "monday": "9:00 - 18:00",
      ...
    }
  ]
}
```
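Converting a cell like `"9:00 - 18:00"` into computable decimal hours might look like the following sketch. It assumes 24-hour times, one shift per cell, no shift crossing midnight, and no unpaid break deducted; the function names are hypothetical.

```typescript
// Convert "H:MM" or "HH:MM" to minutes past midnight.
function toMinutes(hhmm: string): number {
  const match = /^(\d{1,2}):(\d{2})$/.exec(hhmm.trim());
  if (!match) throw new Error(`Unrecognized time: "${hhmm}"`);
  const hours = Number(match[1]);
  const minutes = Number(match[2]);
  if (hours > 23 || minutes > 59) throw new Error(`Out-of-range time: "${hhmm}"`);
  return hours * 60 + minutes;
}

// Convert a day cell such as "9:00 - 18:00" into decimal hours, rounded to 2 places.
function shiftToDecimalHours(cell: string): number {
  const parts = cell.split("-");
  if (parts.length !== 2) throw new Error(`Unrecognized shift: "${cell}"`);
  const start = toMinutes(parts[0]);
  const end = toMinutes(parts[1]);
  if (end <= start) throw new Error(`Shift ends before it starts: "${cell}"`);
  return Math.round(((end - start) / 60) * 100) / 100;
}
```

Throwing on anything unparseable, instead of guessing, leaves the cell for a human to fix in the verification step.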

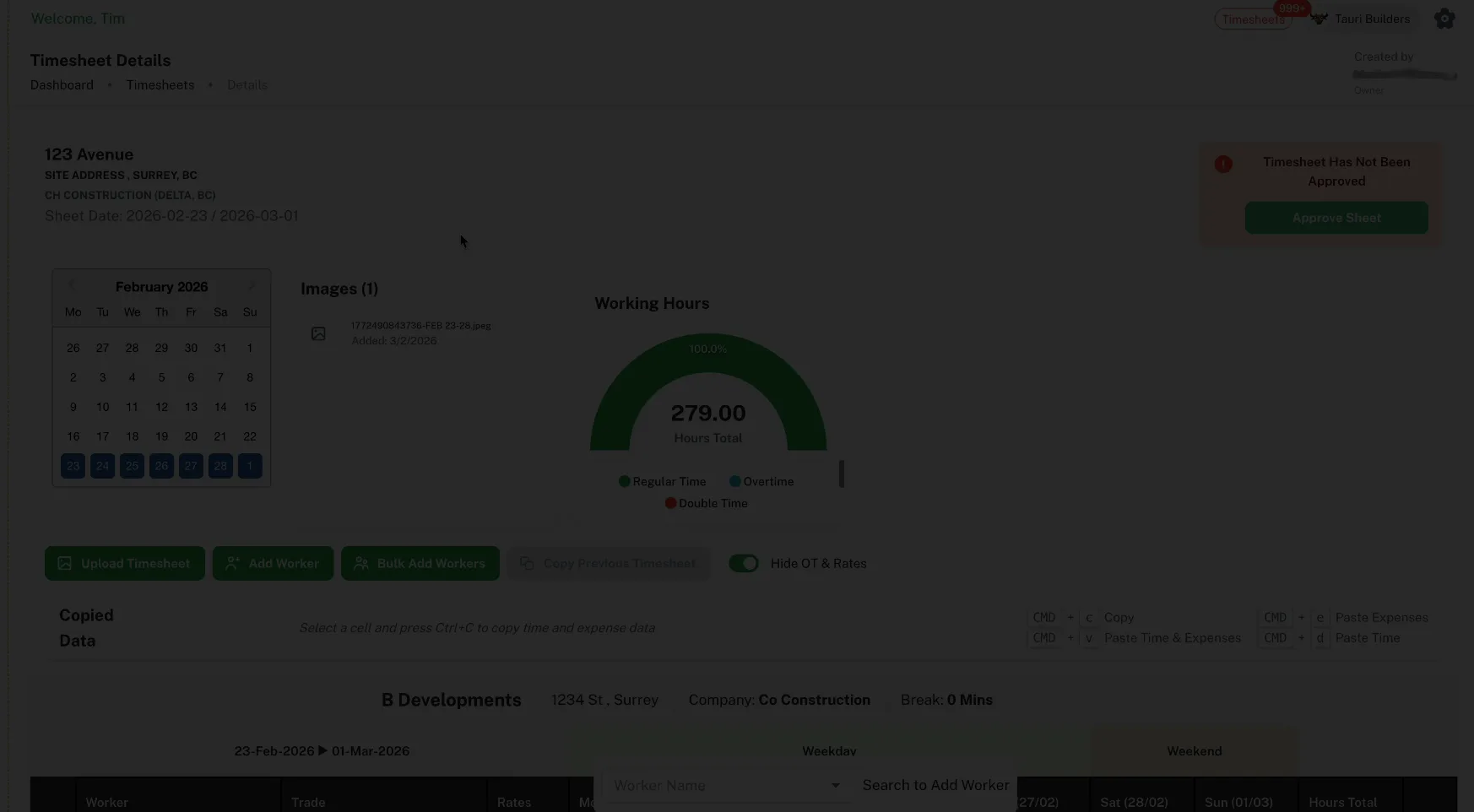

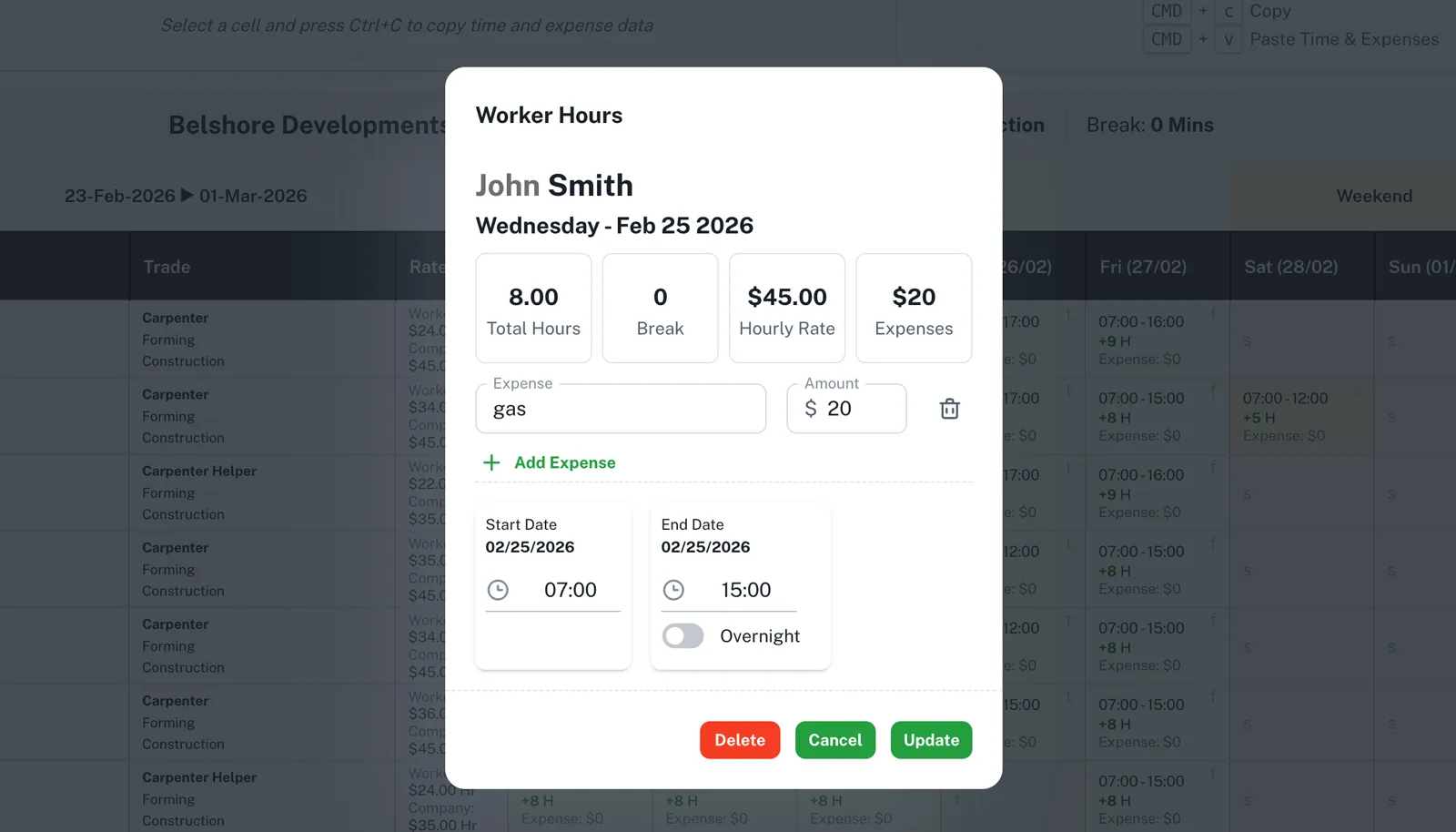

The Digital Twin Verification

The parsed data is projected into a "Digital Twin" interface that mirrors the physical sheet. Staff use this high-contrast, data-dense view to spot-check discrepancies in the AI output. Every edit immediately recalculates the sheet's totals, before anything is committed to the database.
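Recalculating totals on every edit is easiest to reason about as a pure function of the rows: each edit produces a new row array, and the totals are always derived from it rather than stored separately. The row shape and function names below are hypothetical, and hours are assumed to be decimals already.

```typescript
type Day = "monday" | "tuesday" | "wednesday" | "thursday" | "friday" | "saturday" | "sunday";
const DAYS: Day[] = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"];

interface TwinRow {
  workerId: string;
  hours: Partial<Record<Day, number>>; // decimal hours; a missing day means not worked
}

interface TwinTotals {
  perRow: Record<string, number>; // workerId -> weekly hours
  perDay: Record<Day, number>;    // column totals
  grand: number;
}

function recomputeTotals(rows: TwinRow[]): TwinTotals {
  const perRow: Record<string, number> = {};
  const perDay = {} as Record<Day, number>;
  for (const d of DAYS) perDay[d] = 0;
  let grand = 0;
  for (const row of rows) {
    let rowTotal = 0;
    for (const d of DAYS) {
      const h = row.hours[d] ?? 0;
      rowTotal += h;
      perDay[d] += h;
    }
    perRow[row.workerId] = (perRow[row.workerId] ?? 0) + rowTotal;
    grand += rowTotal;
  }
  return { perRow, perDay, grand };
}

// Immutable cell update: returns new rows plus freshly derived totals.
function editCell(rows: TwinRow[], workerId: string, day: Day, value: number) {
  const next = rows.map((row): TwinRow =>
    row.workerId === workerId ? { ...row, hours: { ...row.hours, [day]: value } } : row,
  );
  return { rows: next, totals: recomputeTotals(next) };
}
```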

Power-user ergonomics

Operational UIs need to be fast. I implemented global keyboard listeners to allow staff to blast repetitive time patterns across rows without touching a mouse.
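A pattern-fill shortcut can be written as a pure function plus a small key handler. The Ctrl/Cmd+Shift+D binding and the `fillPattern` and `handleKey` names are hypothetical. The handler takes a minimal key-event shape (the `key`, `ctrlKey`, `metaKey`, and `shiftKey` fields a DOM `KeyboardEvent` also exposes) so the logic runs outside a browser; in the app it would be registered with something like `window.addEventListener("keydown", ...)`.

```typescript
type Pattern = Record<string, string>; // day name -> time range, e.g. { monday: "7:00 - 15:30" }

interface Row {
  workerId: string;
  cells: Pattern;
}

// Copy the source row's pattern into every target row; other rows are returned unchanged.
function fillPattern(rows: Row[], sourceIndex: number, targetIndices: number[]): Row[] {
  const pattern = rows[sourceIndex].cells;
  const targets = new Set(targetIndices);
  return rows.map((row, i) => (targets.has(i) ? { ...row, cells: { ...pattern } } : row));
}

// Minimal key-event shape; a browser KeyboardEvent satisfies it.
interface KeyInput {
  key: string;
  ctrlKey: boolean;
  metaKey: boolean;
  shiftKey: boolean;
}

// Hypothetical binding: Ctrl/Cmd+Shift+D fills the selection from its first row.
function handleKey(e: KeyInput, rows: Row[], selected: number[]): Row[] | null {
  const modifier = e.ctrlKey || e.metaKey;
  if (!(modifier && e.shiftKey && e.key.toLowerCase() === "d") || selected.length < 2) return null;
  const [source, ...targets] = selected;
  return fillPattern(rows, source, targets);
}
```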

The Digital Twin — designed for auditability

Mirroring the physical artifact 1:1 reduced training time to almost nothing. The mental model stays the same; only the speed changes.

Granular day-level data mutation

Travel costs vary from day to day with jobsite distance. Binding expenses at the day level instead of the week level keeps each cost attached to the site where it was actually incurred, which keeps the accounting accurate.
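The benefit of day-level binding shows when a worker splits a week between a near site and a far one: travel is summed per site from the days actually worked. A minimal sketch, assuming a flat dollar travel amount per day entry; the `DayEntry` shape and `travelByJobsite` name are hypothetical.

```typescript
// Hypothetical day-level record: travel is bound to the specific day and site.
interface DayEntry {
  date: string;       // YYYY-MM-DD
  jobsiteId: string;
  hours: number;
  travelCost: number; // flat amount for this day (assumption)
}

// Sum travel per jobsite from the individual days worked there.
function travelByJobsite(entries: DayEntry[]): Map<string, number> {
  const totals = new Map<string, number>();
  for (const e of entries) {
    totals.set(e.jobsiteId, (totals.get(e.jobsiteId) ?? 0) + e.travelCost);
  }
  return totals;
}
```

A single week-level travel figure could not be split between the two sites without guessing; per-day records make the split exact.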

Data Architecture

Foundation

Domain-driven schema design

The schema accurately models the business reality: workers possess multiple role assignments over time, jobsite contracts dictate unique overtime parameters, and the ledger requires strict separation between overhead cost (wages) and revenue (billing).

Relational mapping

- Worker → Role → Base Wage

- Client → Jobsite → Billing Rate

- Jobsite → Overtime Policy

- Selection → Instant validation across all axes
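The mapping can be read as a set of checks that a single selection must pass. This sketch validates one (worker, role, jobsite, date) selection against each axis and reports every failure at once. All type names, the date-ranged role assignments, and the error wording are assumptions for illustration.

```typescript
// Hypothetical tables; dates are ISO "YYYY-MM-DD" strings, which compare correctly as strings.
interface RoleAssignment { workerId: string; roleId: string; baseWage: number; from: string; to: string | null }
interface Jobsite { id: string; clientId: string; overtimePolicyId: string | null }
interface SiteRate { jobsiteId: string; roleId: string; billingRate: number }

interface Schema {
  assignments: RoleAssignment[];
  jobsites: Jobsite[];
  siteRates: SiteRate[];
}

interface EntrySelection { workerId: string; roleId: string; jobsiteId: string; date: string }

function validateSelection(s: Schema, sel: EntrySelection): string[] {
  const errors: string[] = [];
  // Worker -> Role -> Base Wage: the worker must hold this role on this date.
  const held = s.assignments.some(
    (a) =>
      a.workerId === sel.workerId &&
      a.roleId === sel.roleId &&
      a.from <= sel.date &&
      (a.to === null || sel.date <= a.to),
  );
  if (!held) errors.push(`Worker ${sel.workerId} has no active ${sel.roleId} role on ${sel.date}`);
  // Client -> Jobsite -> Billing Rate
  const site = s.jobsites.find((j) => j.id === sel.jobsiteId);
  if (!site) {
    errors.push(`Unknown jobsite ${sel.jobsiteId}`);
    return errors;
  }
  if (!s.siteRates.some((r) => r.jobsiteId === site.id && r.roleId === sel.roleId)) {
    errors.push(`No billing rate for ${sel.roleId} at ${site.id}`);
  }
  // Jobsite -> Overtime Policy
  if (site.overtimePolicyId === null) errors.push(`Jobsite ${site.id} has no overtime policy`);
  return errors;
}
```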

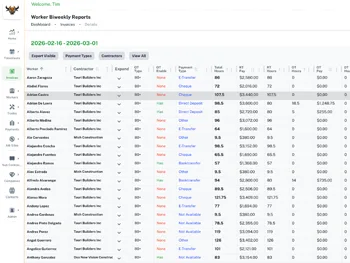

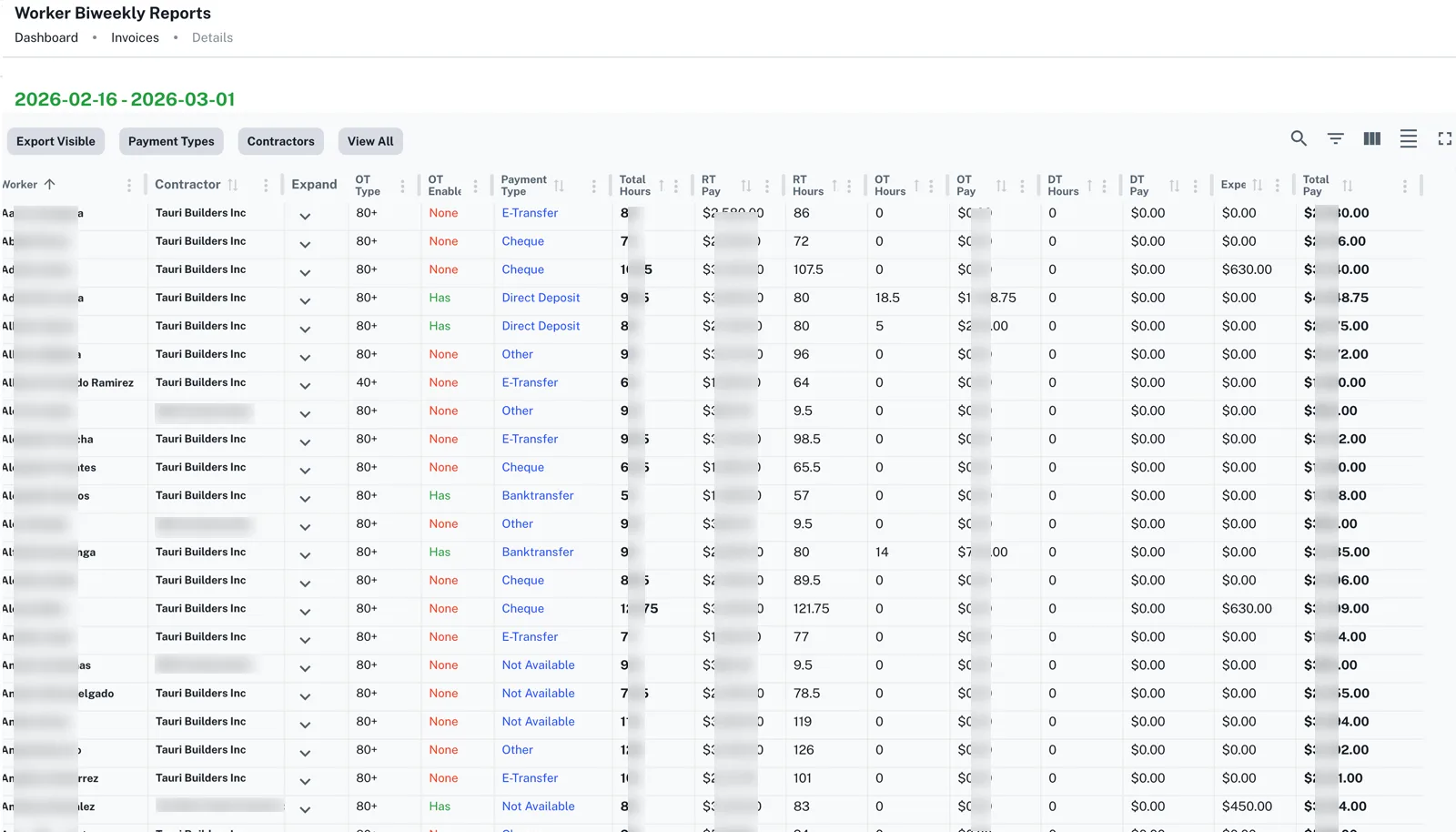

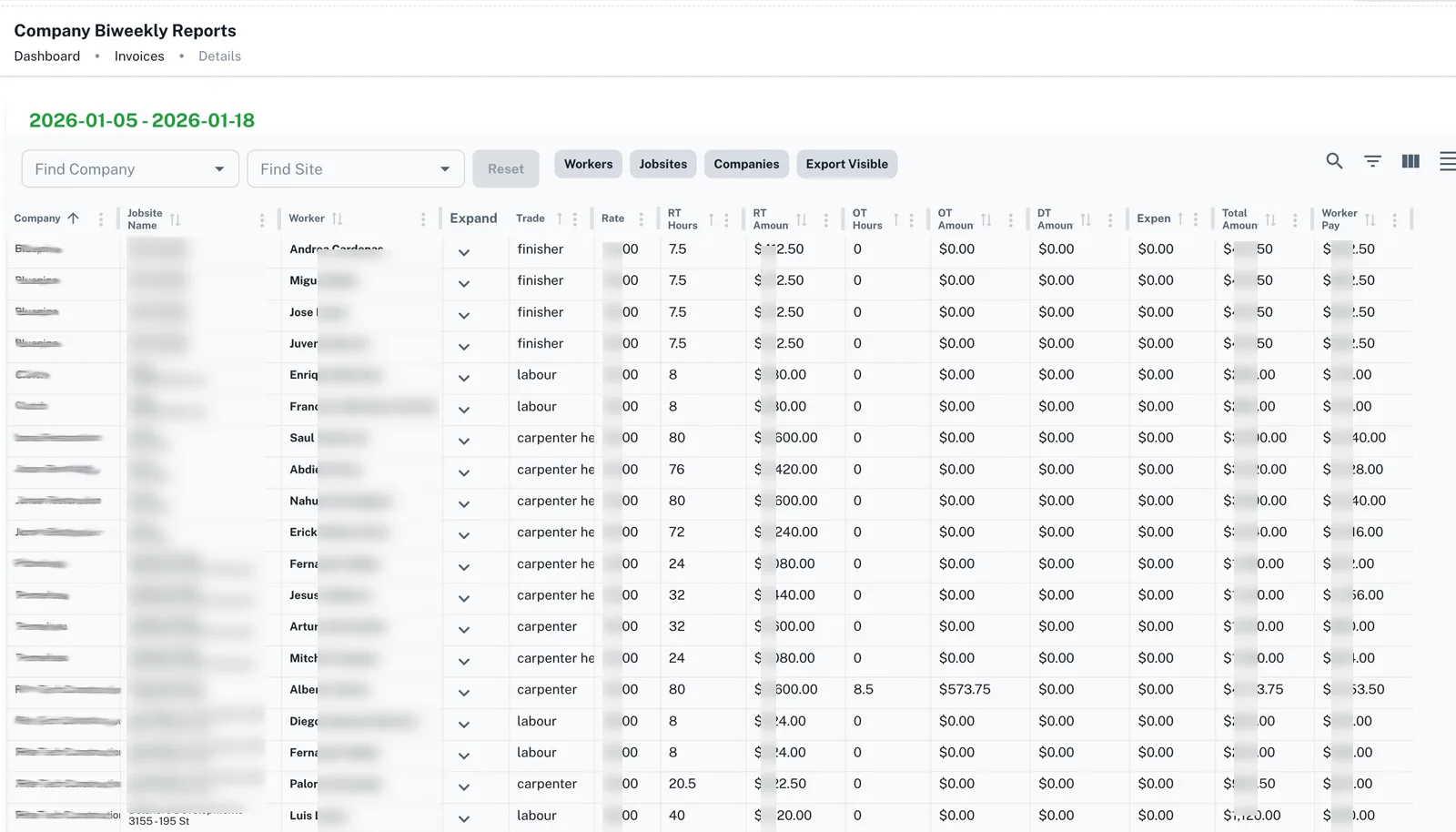

Reporting

Output

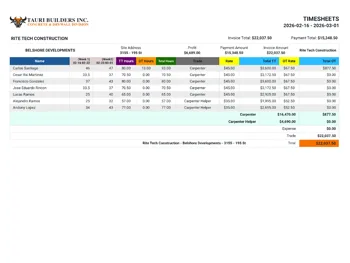

Dual ledgers from a single source of truth

Once timesheets are committed, the API generates isolated data views: one satisfying payroll liabilities, and another formatting accounts receivable for external client invoicing.

Liabilities View — optimized for accounting batch runs

Groups records by payout method (Interac, Cheque, Wire), so accounting teams can process funds efficiently without reshaping the data by hand.
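Grouping the liabilities view by payout method could look like the sketch below. Amounts are kept in integer cents, a common choice that avoids floating-point drift in money; the `PayLine` shape and the `batchByPayoutMethod` name are hypothetical.

```typescript
type PayoutMethod = "Interac" | "Cheque" | "Wire";

interface PayLine {
  workerId: string;
  payoutMethod: PayoutMethod;
  grossPayCents: number;
}

interface PayoutBatch {
  method: PayoutMethod;
  workerCount: number;
  totalCents: number;
  lines: PayLine[];
}

// One batch per payout method, in a fixed order; empty batches are dropped.
function batchByPayoutMethod(lines: PayLine[]): PayoutBatch[] {
  const order: PayoutMethod[] = ["Interac", "Cheque", "Wire"];
  return order
    .map((method) => {
      const group = lines.filter((l) => l.payoutMethod === method);
      return {
        method,
        workerCount: new Set(group.map((l) => l.workerId)).size,
        totalCents: group.reduce((sum, l) => sum + l.grossPayCents, 0),
        lines: group,
      };
    })
    .filter((batch) => batch.lines.length > 0);
}
```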

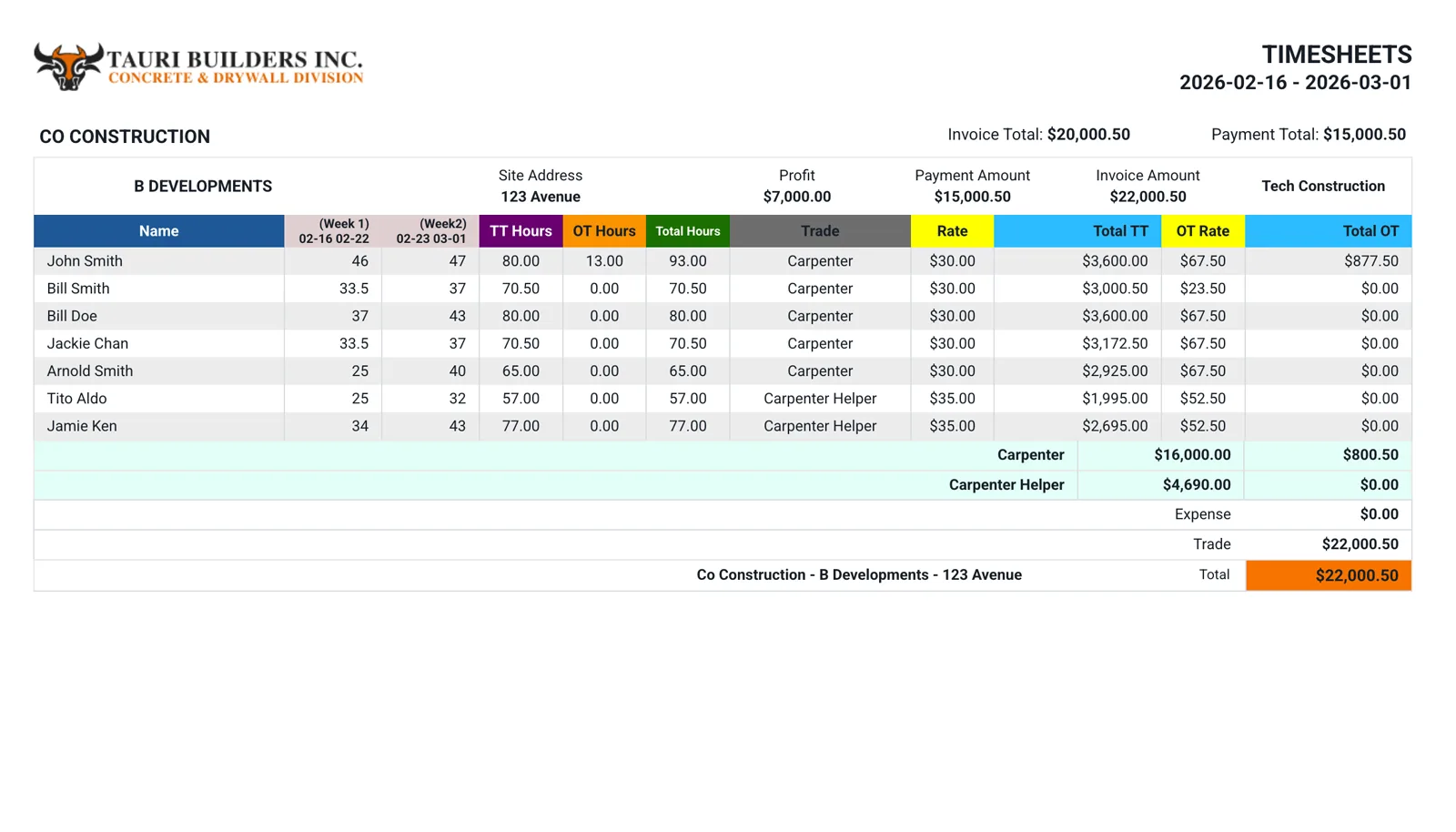

Receivables View — transparent client billing

Filters by client, breaking down complex multi-trade overtime hours into readable client invoices.
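A receivables breakdown for one client can merge billable hours by jobsite and trade, pricing overtime with a multiplier. The field names, the cents representation, and the per-row overtime multiplier are assumptions in this sketch.

```typescript
// Hypothetical pre-split billable hours (regular vs overtime already decided by site policy).
interface BillableHours {
  clientId: string;
  jobsiteId: string;
  trade: string;
  regularHours: number;
  overtimeHours: number;
  rateCents: number;    // billing rate per regular hour, in cents
  otMultiplier: number; // e.g. 1.5 for time-and-a-half
}

interface InvoiceLine {
  jobsiteId: string;
  trade: string;
  regularHours: number;
  overtimeHours: number;
  amountCents: number;
}

// One invoice line per (jobsite, trade) for the given client.
function invoiceLinesForClient(rows: BillableHours[], clientId: string): InvoiceLine[] {
  const merged = new Map<string, InvoiceLine>();
  for (const r of rows) {
    if (r.clientId !== clientId) continue;
    const key = `${r.jobsiteId}|${r.trade}`;
    const line = merged.get(key) ?? {
      jobsiteId: r.jobsiteId,
      trade: r.trade,
      regularHours: 0,
      overtimeHours: 0,
      amountCents: 0,
    };
    line.regularHours += r.regularHours;
    line.overtimeHours += r.overtimeHours;
    line.amountCents += Math.round(
      r.regularHours * r.rateCents + r.overtimeHours * r.rateCents * r.otMultiplier,
    );
    merged.set(key, line);
  }
  return Array.from(merged.values());
}
```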

Automated PDF rendering for immediate dispatch

Generates professional, contract-compliant PDFs directly from the browser, entirely bypassing legacy spreadsheet formatting steps.

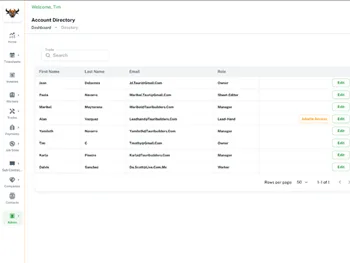

Security

Auth

RBAC and financial data masking

To keep operations moving, junior staff needed to perform OCR verification without being exposed to sensitive corporate margins. I implemented strict Role-Based Access Control (RBAC) spanning both the UI rendering logic and the API endpoints.

Administrator

- Unrestricted CRUD on base rates & billing margins

- Full financial analytics visibility

Payroll Officer

- Timesheet ingestion, verification, commit

- Export capabilities for client billing

Data Entry

- Document ingestion & OCR verification

For non-administrator roles, both the API and the UI withhold financial rates and revenue.
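The three roles above translate naturally into a capability table that both the API and the UI can consult. The capability strings are hypothetical labels for the listed permissions, and the sketch assumes the Administrator also holds every lower-tier capability.

```typescript
type Role = "Admin" | "PayrollOfficer" | "DataEntry";

type Capability =
  | "rates:write"
  | "financials:read"
  | "timesheets:ingest"
  | "timesheets:verify"
  | "timesheets:commit"
  | "billing:export";

// Role -> capabilities, mirroring the role list above (Admin assumed to hold everything).
const CAPABILITIES: Record<Role, readonly Capability[]> = {
  Admin: ["rates:write", "financials:read", "timesheets:ingest", "timesheets:verify", "timesheets:commit", "billing:export"],
  PayrollOfficer: ["timesheets:ingest", "timesheets:verify", "timesheets:commit", "billing:export"],
  DataEntry: ["timesheets:ingest", "timesheets:verify"],
};

// UI: decide whether to render a control.
function can(role: Role, capability: Capability): boolean {
  return CAPABILITIES[role].includes(capability);
}

// API: reject the call outright.
function assertCan(role: Role, capability: Capability): void {
  if (!can(role, capability)) throw new Error(`Forbidden: ${role} lacks ${capability}`);
}
```

Keeping one table means the UI and the API cannot drift apart on who may do what.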

My Role

End-to-end architecture & execution. I owned the entire project lifecycle from initial requirements gathering and schema design through to full-stack AWS deployment and user handoff.

Systems Design

- Mapped complex organizational logic into a normalized PostgreSQL schema

- Designed a multi-role data model to solve the issue of workers carrying concurrent billing classifications

- Architected secure RBAC layers preventing API data-leaks for lower-tier roles

Product & UX

- Conducted field interviews with admin staff to understand the true operational bottlenecks

- Designed the 'Digital Twin' UI paradigm to minimize friction for non-technical users

- Built high-productivity features like bulk keyboard shortcuts for rapid data entry

Engineering

- Developed the React/Material UI frontend and FeathersJS/Node backend

- Integrated Claude AI for complex OCR parsing, migrating from Amazon Textract for higher handwriting accuracy

- Engineered fuzzy-logic SQL matching to handle inconsistent foreman name spelling

- Deployed and managed the infrastructure pipeline on AWS (EC2, Elastic Beanstalk, S3, RDS)

Impact

The core business victory was operational decentralization. By moving the complex rate logic out of an individual's head and into the codebase, Tauri Builders can now scale their operations and delegate timesheet processing without risking catastrophic payroll errors.

Migrating from Amazon Textract to Claude AI also proved vital. Construction handwriting is inherently chaotic, and Claude's contextual interpretation of each sheet drastically reduced silent failures, minimizing the manual edits required in the Digital Twin interface.

Challenges & Tradeoffs

Decoupling Overtime from the Worker

A standard assumption is that overtime rules apply to a worker. At Tauri, overtime rules are negotiated into specific client contracts, so the same worker might trigger overtime differently depending on the site.

Decision

Architected the schema to nest overtime policies at the Jobsite level, not the User profile. Selecting the site dynamically overrides base calculation thresholds, keeping the logic perfectly aligned with contractual reality.
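Keeping the policy on the jobsite means the same week of hours splits differently depending on where it was worked. The sketch assumes a contract can specify a daily threshold, a weekly threshold, or both, applying the daily rule first and then the weekly rule to the hours that remain regular; actual contract terms may be structured differently, and the names are hypothetical.

```typescript
// Hypothetical per-jobsite overtime policy; null means the contract has no such rule.
interface OvertimePolicy {
  dailyThreshold: number | null;
  weeklyThreshold: number | null;
}

interface HoursSplit {
  regular: number;
  overtime: number;
}

// Daily rule first, then the weekly rule on the remaining regular hours.
function splitOvertime(dailyHours: number[], policy: OvertimePolicy): HoursSplit {
  let regular = 0;
  let overtime = 0;
  for (const h of dailyHours) {
    if (policy.dailyThreshold !== null && h > policy.dailyThreshold) {
      regular += policy.dailyThreshold;
      overtime += h - policy.dailyThreshold;
    } else {
      regular += h;
    }
  }
  if (policy.weeklyThreshold !== null && regular > policy.weeklyThreshold) {
    overtime += regular - policy.weeklyThreshold;
    regular = policy.weeklyThreshold;
  }
  return { regular, overtime };
}
```

For five 10-hour days, a site with an 8-hour daily and 40-hour weekly rule yields 40 regular and 10 overtime hours, while a site with only a 44-hour weekly rule yields 44 and 6.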

The Multi-Role Dilemma

A single worker could act as a general labourer on Monday and a skilled carpenter on Thursday, each requiring different pay and billing rates. Splitting them into two user accounts would break historic tax reporting.

Decision

Built a polymorphic 'Roles' bridge table. Staff assign hours strictly via Role IDs rather than Worker IDs, preserving a single identity while allowing infinite rate variations over time.
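Logging hours against a role assignment rather than a worker might look like the sketch below: rates live on the bridge row, and the worker's single identity is reached through it, so per-worker totals stay intact across roles. The `RoleAssignment` shape and the cents-based rates are assumptions.

```typescript
// Hypothetical bridge row: one worker can hold several, each with its own rates.
interface RoleAssignment {
  id: string;
  workerId: string;
  role: string;
  payRateCents: number;
  billRateCents: number;
}

// Hours reference a role assignment, never a worker directly.
interface TimeEntry {
  roleAssignmentId: string;
  date: string;
  hours: number;
}

// Total pay per worker, across every role they worked.
function payByWorker(assignments: RoleAssignment[], entries: TimeEntry[]): Map<string, number> {
  const byId = new Map(assignments.map((a): [string, RoleAssignment] => [a.id, a]));
  const totals = new Map<string, number>();
  for (const e of entries) {
    const a = byId.get(e.roleAssignmentId);
    if (!a) throw new Error(`Unknown role assignment ${e.roleAssignmentId}`);
    totals.set(a.workerId, (totals.get(a.workerId) ?? 0) + Math.round(e.hours * a.payRateCents));
  }
  return totals;
}
```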

Masking Payload Data

Delegating task-work to junior staff meant they needed access to view hours, but absolutely could not see wage rates or revenue totals over the wire in DevTools.

Decision

Applied rigorous conditional filtering at the API hook level based on the authenticated JWT scope. If the role isn't 'Admin', the backend strips all financial nodes from the JSON response before dispatching.
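A Feathers-style after hook that strips financial fields before the response leaves the server could look like this sketch. To keep it runnable without Feathers installed, `HookContextLike` is a hand-written stand-in for the two parts of a hook context it touches (`params.user` and `result`), and the set of financial keys is hypothetical. It fails closed: a request with no user also gets the stripped payload.

```typescript
// Hypothetical financial field names; the real schema's names are not given here.
const FINANCIAL_KEYS = new Set(["wageRate", "billingRate", "revenue", "margin", "grossPay"]);

// Minimal stand-in for the parts of an after-hook context used below (assumed shape).
interface HookContextLike {
  params: { user?: { role: string } };
  result: unknown;
}

// Deep-copy a JSON-like value, dropping financial keys at any depth.
function stripFinancial(value: unknown): unknown {
  if (Array.isArray(value)) return value.map(stripFinancial);
  if (value !== null && typeof value === "object") {
    const out: Record<string, unknown> = {};
    for (const [key, child] of Object.entries(value)) {
      if (!FINANCIAL_KEYS.has(key)) out[key] = stripFinancial(child);
    }
    return out;
  }
  return value;
}

// After hook: only Admin receives financial fields.
function maskFinancials(context: HookContextLike): HookContextLike {
  if (context.params.user?.role !== "Admin") {
    context.result = stripFinancial(context.result);
  }
  return context;
}
```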

Learnings

Domain discovery is archaeology

I learned quickly that business rules are rarely documented in a folder. They live implicitly in the minds of veteran staff. Translating 'that's just how we do it' into strict schema constraints was the hardest, and most valuable, part of the build.

UI prototyping earlier in the cycle

I heavily prioritized the backend data modeling—which was necessary—but waiting to present the 'Digital Twin' interface meant iterating on the table UX later than I'd prefer. Next time, I would test dummy-data layouts with the end-users concurrently while building the API.

AI as a feature, not a gimmick

Using AI to parse unstructured handwriting isn't a parlor trick here; it is the core data-entry mechanism. Recognizing the limitations of OCR and deliberately building a fast, keyboard-friendly human validation step (the Digital Twin) is what made the feature actually usable in production.

Stack

The architecture prioritized operational stability, relational data integrity, and rapid deployment. I chose a mature, battle-tested full-stack approach capable of handling complex business logic securely.

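Frontend Application

React, Material UI

Backend & Data

Node.js, FeathersJS, PostgreSQL

Infrastructure & Integrations

AWS (EC2, Elastic Beanstalk, S3, RDS), Claude AI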